Cluster Architecture Overview

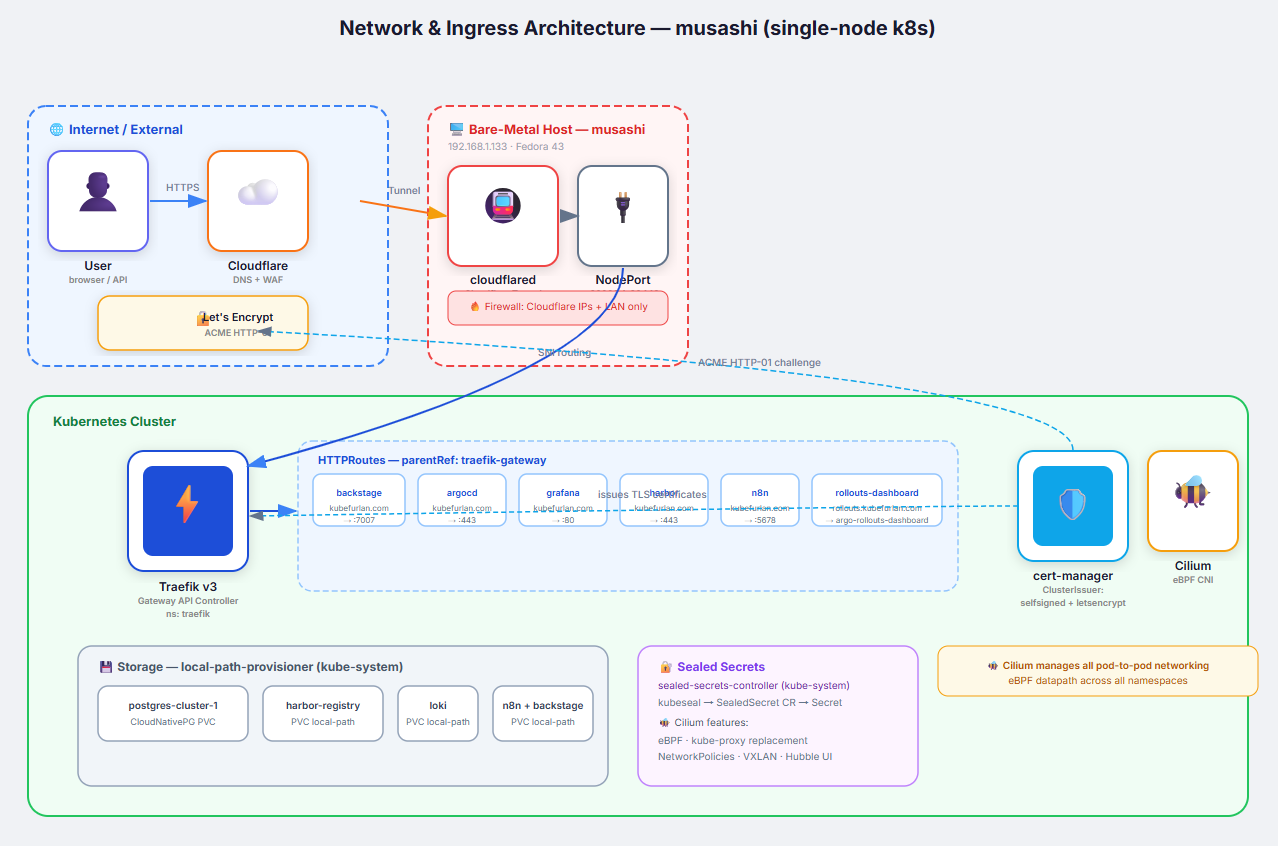

This homelab runs a single-node, production-grade Kubernetes cluster on bare metal. The goal is to mirror real-world platform engineering practices — GitOps, observability, automated deployments, and secure secret management — in a resource-constrained environment.

Hardware

The entire cluster runs on a single machine, musashi:

| Attribute | Value |
|---|---|
| CPU | AMD Ryzen 7 |
| RAM | 16 GB DDR5 |
| Storage | 1 TB SSD |
| OS | Fedora Linux 43 (Workstation Edition) |
| IP Address | 192.168.1.133 |

Running a full Kubernetes stack on a single node is a deliberate design choice for this homelab: it forces efficient resource management and makes the infrastructure cheap enough to run 24/7, while still exercising every component a multi-node production cluster would have.

Kubernetes Distribution

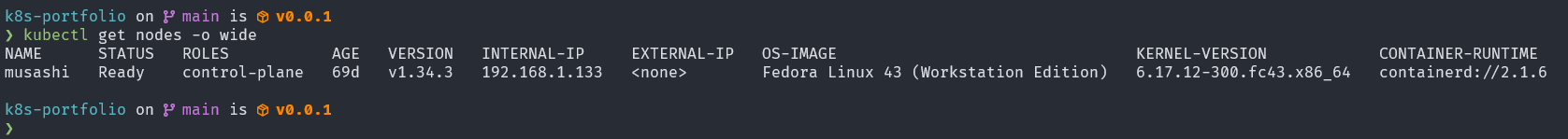

The cluster was bootstrapped with kubeadm and runs Kubernetes v1.34.3 with containerd v2.1.6 as the container runtime.

    NAME      STATUS   ROLES           AGE   VERSION   INTERNAL-IP     OS-IMAGE
    musashi   Ready    control-plane   69d   v1.34.3   192.168.1.133   Fedora Linux 43

kubeadm gives full control over the cluster configuration. Unlike k3s or microk8s, every control-plane component (etcd, kube-apiserver, kube-scheduler, kube-controller-manager) runs as a regular pod (a static pod managed by the kubelet), which is ideal for learning operational patterns that apply directly to production kubeadm clusters.
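As a rough illustration of the control kubeadm exposes, a cluster like this one could be initialized from a configuration file along the following lines. This is a sketch and not the configuration actually used here: only the Kubernetes version comes from the text above, and the pod subnet is an assumed value.

```yaml
# Hypothetical kubeadm configuration, passed with `kubeadm init --config <file>`.
apiVersion: kubeadm.k8s.io/v1beta4
kind: ClusterConfiguration
kubernetesVersion: v1.34.3       # version stated in this document
networking:
  podSubnet: 10.244.0.0/16       # assumption: the pod CIDR is not given in this document
  serviceSubnet: 10.96.0.0/12    # kubeadm's default service CIDR
```

After initialization, kubeadm writes the control-plane static pod manifests to /etc/kubernetes/manifests on the node, which is where the kubelet picks them up.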

Networking — Cilium CNI

The CNI plugin is Cilium, running as a DaemonSet in kube-system. Cilium handles pod networking and NetworkPolicy enforcement; standard kube-proxy is used for service routing. Cilium was chosen for:

- NetworkPolicy enforcement: enforces standard NetworkPolicy resources and extends them with the CiliumNetworkPolicy CRD for L7-aware rules

- eBPF-based packet processing: efficient kernel-level dataplane for pod-to-pod traffic

- Operational maturity: well-established in production Kubernetes environments
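To make the L7-aware rules concrete, here is a minimal sketch of a CiliumNetworkPolicy. It is not taken from this cluster: the namespace, labels, port, and path are all hypothetical and only illustrate the shape of an HTTP-level rule.

```yaml
# Hypothetical policy: pods labeled app=frontend may only issue
# GET /healthz requests to pods labeled app=api on TCP port 8080.
apiVersion: cilium.io/v2
kind: CiliumNetworkPolicy
metadata:
  name: allow-frontend-healthz   # hypothetical name
  namespace: demo                # hypothetical namespace
spec:
  endpointSelector:
    matchLabels:
      app: api                   # hypothetical label
  ingress:
    - fromEndpoints:
        - matchLabels:
            app: frontend        # hypothetical label
      toPorts:
        - ports:
            - port: "8080"
              protocol: TCP
          rules:
            http:
              - method: GET
                path: "/healthz"
```

A standard NetworkPolicy can only express the port-level part of this rule; the `rules.http` block is the Cilium-specific extension.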

    kube-system   cilium            DaemonSet    1/1   Running   69d
    kube-system   cilium-operator   Deployment   1/1   Running   18d

Ingress — Traefik + Gateway API

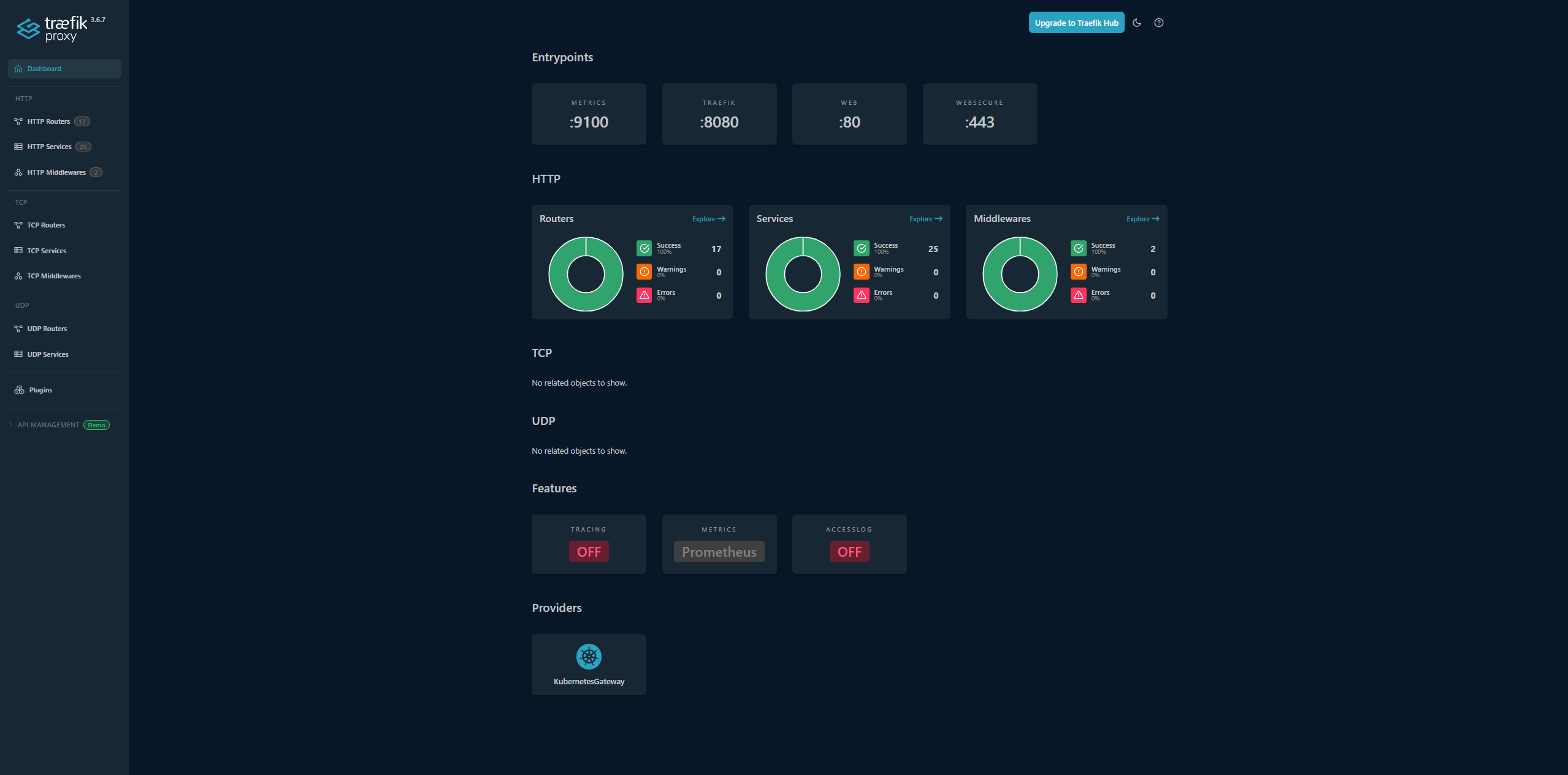

Layer 7 routing is handled by Traefik running in the traefik namespace, exposed as a NodePort service:

| Port | Protocol | NodePort |
|---|---|---|
| 80 | HTTP | 30080 |
| 443 | HTTPS | 30443 |
| 8080 | Dashboard | 30081 |
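The port mapping in the table could be expressed as a Service manifest roughly like the one below. This is a sketch under assumptions: the Service name, pod selector label, and named target ports are guesses, and only the namespace, ports, and NodePorts come from the table.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: traefik                        # assumed Service name
  namespace: traefik
spec:
  type: NodePort
  selector:
    app.kubernetes.io/name: traefik    # assumed pod label
  ports:
    - name: web
      port: 80
      targetPort: web                  # assumed named container port
      nodePort: 30080
    - name: websecure
      port: 443
      targetPort: websecure            # assumed named container port
      nodePort: 30443
    - name: dashboard
      port: 8080
      targetPort: traefik              # assumed named container port
      nodePort: 30081
```

All three NodePorts fall inside the default Kubernetes NodePort range of 30000-32767, so no API server flag changes are needed for them.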

Traefik implements the Kubernetes Gateway API (gateway.networking.k8s.io/v1) rather than legacy Ingress objects. All application routing is declared as HTTPRoute resources, which is the modern, vendor-neutral standard:

    # Example HTTPRoute for ArgoCD
    apiVersion: gateway.networking.k8s.io/v1
    kind: HTTPRoute
    metadata:
      name: argocd-route
      namespace: argocd
    spec:
      hostnames:
        - argocd.kubefurlan.com
      parentRefs:
        - name: traefik-gateway
          namespace: traefik

External access to cluster services uses a Cloudflare Tunnel (cloudflared) running as a systemd user service on the host. The tunnel terminates TLS at Cloudflare’s edge and forwards traffic to Traefik’s ClusterIP. This avoids opening inbound ports on the home router.
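For orientation, a cloudflared configuration for this kind of setup might look roughly like the sketch below. The tunnel ID, credentials path, and ClusterIP are placeholders rather than values from this cluster; the hostname is the one used in the HTTPRoute example.

```yaml
# Hypothetical cloudflared config.yml; angle-bracket values are placeholders.
tunnel: <tunnel-id>
credentials-file: <path-to-credentials>.json
ingress:
  # Forward the public hostname to Traefik's HTTP entry point inside the cluster.
  - hostname: argocd.kubefurlan.com
    service: http://<traefik-cluster-ip>:80
  # cloudflared requires a final catch-all rule.
  - service: http_status:404
```

Because the tunnel connects outbound from the host to Cloudflare, the origin service can be plain HTTP on a cluster-internal address.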

Storage — local-path Provisioner

Persistent volumes are provided by the local-path-provisioner running in local-path-storage. It dynamically provisions hostPath-backed PVCs under /opt/local-path-provisioner/.

This is appropriate for a single-node homelab:

- Zero overhead (no distributed storage layer)

- Instant provisioning

- Data lives on the fast local SSD

The limitation — no replication — is mitigated by the multi-layer backup strategy.
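A workload requests this storage through an ordinary PersistentVolumeClaim. The sketch below assumes the StorageClass is named `local-path` (the provisioner's usual default, not confirmed by this document); the claim name, namespace, and size are hypothetical.

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: demo-data                # hypothetical claim name
  namespace: default             # hypothetical namespace
spec:
  accessModes:
    - ReadWriteOnce              # hostPath-backed volumes are tied to a single node
  storageClassName: local-path   # assumed StorageClass name
  resources:
    requests:
      storage: 1Gi               # hypothetical size
```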

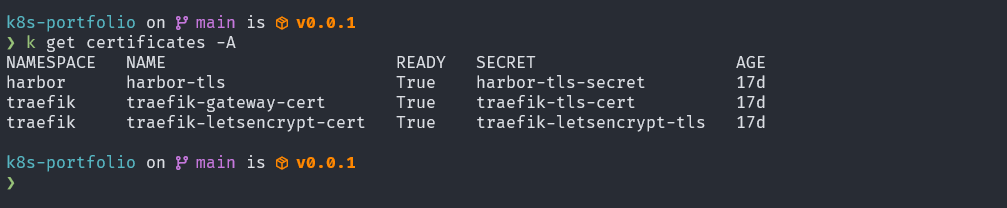

Certificate Management — cert-manager

TLS certificates are managed by cert-manager in the cert-manager namespace:

    cert-manager   cert-manager-77f7698dcf   1/1   Running   19d
    cert-manager   cert-manager-cainjector   1/1   Running   19d
    cert-manager   cert-manager-webhook      1/1   Running   19d

Two ClusterIssuer resources are configured:

| Issuer | Type | Use |
|---|---|---|
| letsencrypt-prod | ACME / Cloudflare DNS-01 | Production TLS for *.kubefurlan.com |
| selfsigned-issuer | Self-signed | Internal services, Harbor registry |
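The two issuers could be declared along the following lines. This is a sketch, not the manifests used in this cluster: the issuer names and the Cloudflare DNS-01 solver type come from the table, while the contact email and the Secret names and keys are placeholders.

```yaml
apiVersion: cert-manager.io/v1
kind: ClusterIssuer
metadata:
  name: letsencrypt-prod
spec:
  acme:
    server: https://acme-v02.api.letsencrypt.org/directory
    email: admin@example.com                 # placeholder contact address
    privateKeySecretRef:
      name: letsencrypt-prod-account-key     # hypothetical Secret for the ACME account key
    solvers:
      - dns01:
          cloudflare:
            apiTokenSecretRef:
              name: cloudflare-api-token     # hypothetical Secret holding the API token
              key: api-token                 # hypothetical key within that Secret
---
apiVersion: cert-manager.io/v1
kind: ClusterIssuer
metadata:
  name: selfsigned-issuer
spec:
  selfSigned: {}
```

DNS-01 validation is what makes the wildcard certificate possible, since Let's Encrypt does not issue wildcard certificates through HTTP-01.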

Active certificates:

| Certificate | Namespace | Status |
|---|---|---|
| harbor-tls | harbor | Ready |
| traefik-gateway-cert | traefik | Ready |
| traefik-letsencrypt-cert | traefik | Ready |

Conceptual Architecture Diagram


All Running Workloads

Grouped by function:

CI/CD & GitOps

| Namespace | Workload | Purpose |
|---|---|---|
| argocd | argocd-server, application-controller, repo-server, dex-server, redis | ArgoCD GitOps controller |
| argo-rollouts | argo-rollouts, argo-rollouts-dashboard | Progressive delivery (canary deployments) |

Monitoring & Observability

| Namespace | Workload | Purpose |
|---|---|---|
| monitoring | prometheus-kube-prometheus-stack-prometheus-0 | Metrics storage and query |
| monitoring | kube-prometheus-stack-grafana | Dashboards and visualization |
| monitoring | alertmanager-kube-prometheus-stack-alertmanager-0 | Alert routing (Telegram + Gmail) |
| monitoring | kube-prometheus-stack-kube-state-metrics | Kubernetes object metrics |
| monitoring | kube-prometheus-stack-prometheus-node-exporter | Host metrics (CPU, memory, disk) |
| monitoring | alloy (DaemonSet) | Log collection (pods + systemd journal) |
| monitoring | loki-0 | Log storage |
| monitoring | prometheus-blackbox-exporter | HTTP endpoint probing |
| monitoring | prometheus-pushgateway | Batch job metrics (restic, pg_dump) |

Security & Infrastructure

| Namespace | Workload | Purpose |
|---|---|---|
| cert-manager | cert-manager, cainjector, webhook | TLS certificate automation |
| kube-system | sealed-secrets-controller | Encrypted Kubernetes secrets |
| harbor | harbor-core, registry, trivy, jobservice, portal | Private container registry + vulnerability scanning |
| kube-system | cilium (DaemonSet), cilium-operator | Pod networking (CNI) and NetworkPolicy enforcement |

Data Platform

| Namespace | Workload | Purpose |
|---|---|---|
| postgres | postgres-cluster-1 | CloudNativePG managed PostgreSQL |
| cnpg-system | cnpg-controller-manager | CloudNativePG operator |
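With CloudNativePG, the database itself is declared as a Cluster resource that the operator reconciles into pods. The sketch below is an assumption-laden illustration: the cluster name is inferred from the pod name `postgres-cluster-1`, and the instance count, StorageClass, and volume size are not stated in this document.

```yaml
apiVersion: postgresql.cnpg.io/v1
kind: Cluster
metadata:
  name: postgres-cluster         # inferred from the pod name postgres-cluster-1
  namespace: postgres
spec:
  instances: 1                   # assumption: a single instance on a single-node cluster
  storage:
    storageClass: local-path     # assumed StorageClass name
    size: 10Gi                   # hypothetical volume size
```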

Backup

| Namespace | Workload | Purpose |
|---|---|---|
| velero | velero | Kubernetes resource backup |
| velero | node-agent (DaemonSet) | Kopia-based volume backup |

Applications

| Namespace | Workload | Purpose |
|---|---|---|
| backstage | backstage | Internal Developer Portal (IDP) |
| n8n | n8n | Workflow automation |
| miniflux | miniflux | RSS feed reader |